The Hidden Thinking Tax of AI: How to Stay Cognitively Sharp

Tambellini Author

AI tools are changing how we work. Left unchecked, they’ll change how we think too.

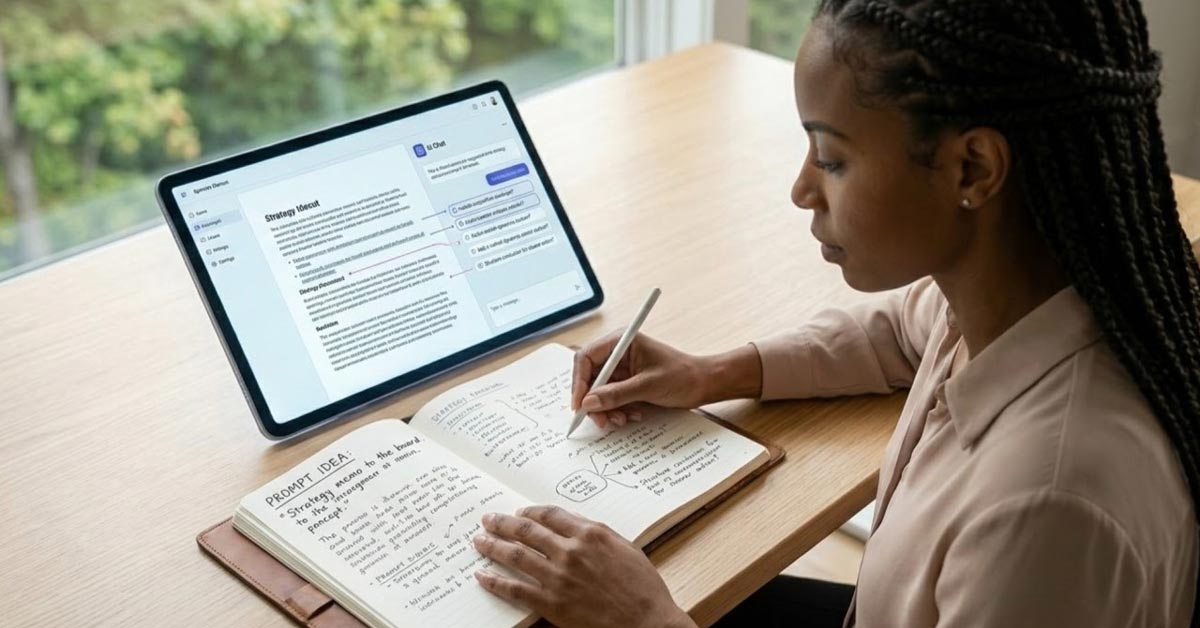

You’re staring at a blank document. A strategy memo is due by the end of the day.

Instead of wrestling with the problem, sketching out arguments and weighing the trade-offs, you open your AI assistant and type: “Write a strategic memo about X.”

Thirty seconds later, you have a polished draft.

You tweak a few sentences, hit send, and move on. Thirty seconds of prompting replaces an hour of thinking.

It felt productive. But did you actually think?

This scenario plays out millions of times a day across knowledge work. AI tools have become default collaborators for writing, analysis, and decision-making, and for good reason. They’re good at what they do. But the way most of us use these tools carries a hidden cost, one that compounds quietly over time.

The Cognitive Offloading Problem

Cognitive offloading is the technical term for using external tools to reduce mental effort. We’ve been doing it since we started writing things down. But what AI enables is different in kind from a notebook or a calculator.

In 2011, researchers Betsy Sparrow, Jenny Liu, and Daniel Wegner published a study in Science that introduced what became known as the “Google Effect.” They found that when people expected information to remain available online, they were less likely to commit it to memory. We didn’t stop remembering entirely. We shifted what we remembered, favoring where to find information over the information itself.

That was just search.

AI goes further. We’re no longer outsourcing memory alone. We’re outsourcing reasoning, synthesis, and judgment. When an AI drafts your analysis, structures your argument, or suggests your conclusion, the cognitive work that once built your analytical ability is being done by something else.

A 2025 study published in Societies by Michael Gerlich examined this directly. Across 666 participants, frequent AI tool use correlated negatively with critical thinking scores, and cognitive offloading mediated that relationship. Younger participants, who tended to use AI tools most, also scored lowest on critical thinking assessments.

Why Knowledge Workers Should Pay Attention

If your job involves physical work, better tools amplify your output. If your job involves thinking, the relationship between tools and skill is more complicated.

Knowledge workers get paid to synthesize information, assess quality, work through ambiguity, and make calls under uncertainty. These are exactly the functions AI makes easiest to bypass.

When you ask an AI to draft a client recommendation before forming your own view, you’re not just saving time. You’re skipping the work that builds strategic judgment in the first place. When you accept AI-generated analysis without questioning the assumptions behind it, you’re letting that judgment weaken.

This isn’t an argument against the tools. It’s a recognition that passive use of them has consequences.

AI Is Genuinely Useful. That’s Not the Question.

As someone who builds and deploys AI systems, I use these tools every day. Coding agents, research assistants, workflow automation. They are genuinely powerful.

AI tools speed up research. They help get past writer’s block. They spot patterns in data that would take you hours to find manually. They handle the repetitive stuff, like summarizing meeting notes or formatting reports, so you can spend your time on work that actually requires judgment.

None of that is in dispute. The question is about how you engage with these tools. There’s a real difference between using AI to pressure-test your thinking and using it to avoid thinking altogether. One makes you better. The other doesn’t.

A useful comparison: think about how GPS works in practice. If you pay attention while it guides you, noting the landmarks and the general direction, you build a mental map over time. If you follow turn-by-turn directions for years without ever looking up, you eventually can’t navigate without the app. Same tool, different outcomes depending on how you use it.

A Framework for Using AI Without Losing Your Edge

The people who will do well in an AI-saturated work environment won’t be the heaviest users or the holdouts. They’ll be the deliberate users.

Here’s a practical framework.

1. Think First, Then Augment

The single most useful habit: do your own thinking before engaging the AI.

- Form a hypothesis first. Before asking an AI to analyze something, take five minutes to write down what you think the answer might be and why. This forces you to do the initial synthesis work yourself. Use the AI afterward to challenge or extend your reasoning, not to generate it from scratch.

- Write before you delegate. For anything you’re writing, get a rough outline or a messy draft down on your own first. The cognitive value is in the structuring and argumentation. The polish is where AI helps.

- Try the “explain it back” test. After AI helps with research or analysis, explain the conclusions to someone without looking at the output. If you can’t do it, you didn’t learn anything. You just passed information through.

2. Design Your Workflow Around What Matters

Not every task benefits from AI, and some are better done the hard way.

- Keep some work AI-free. Strategic planning, creative problem-solving, and complex judgment calls are tasks where the effort is the point. Using AI on these doesn’t just save time; it removes the part that develops your skill.

- On high-stakes work, let AI accelerate but not originate. For strategy documents, client recommendations, and key decisions, use AI to speed up your research and sharpen your writing. But the reasoning should be yours. That’s what you’re being paid for.

- Let AI handle the routine. Summarizing notes, cleaning up formatting, drafting standard emails: these are fine to hand off. Free up your attention for the work that actually requires and develops your judgment.

3. Train Your Critical Thinking

Critical thinking atrophies without use, like any skill.

- Question AI outputs. Get in the habit of asking: what assumptions does this rest on? What’s left out? How would this change if the inputs were different? Every AI interaction can be a small critical thinking exercise if you treat it that way.

- Work through problems without AI sometimes. Not because it’s more efficient, but because it keeps your reasoning sharp. It’s the cognitive equivalent of choosing the stairs over the elevator.

- Seek out friction on purpose. Not everything needs to be optimized. Some of the most useful thinking happens when you’re stuck, working through something complicated without a shortcut. Read long-form pieces. Have the debate with a colleague before you ask the chatbot. Give your brain the chance to do the work.

- Vary your inputs. If every analysis you do runs through the same AI tool, your thinking starts to converge on one pattern. Go to primary sources. Look for perspectives the AI wouldn’t surface on its own.

Where This Is Heading

AI tools will keep getting better and more embedded in how we work. The pull toward leaning on them for the hard cognitive work will only increase.

People who navigate this well won’t be the ones who refuse AI. But they also won’t be the ones who hand their thinking over to it.

The differentiator will be intentionality: knowing when you’re actually thinking versus when you’re letting a tool think for you, and choosing to do the harder thing when the stakes warrant it.

These tools can make you sharper or make you lazier.

It depends on how you use them.

Originally posted by Alpha Hamadou Ibrahim on LinkedIn. Be sure to follow him there to catch all his great industry insights.

© Copyright 2026, The Tambellini Group. All Rights Reserved.